You Need Influence, Not Influencers

We built an AI actor that hit 1M views in a month — then we built the tool that makes them.

March 2, 2026

The way TikTok works is that videos need to pass through gates before the algorithm will show them to more people. The bands seem to be around 2,000, 5,000, and 10,000 views. Once a video crosses 10k, TikTok deems it eligible to go viral. Below that, you’re auditioning. Above it, you’re performing.
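As a rough illustration of that gating idea, here is a minimal sketch. The thresholds are the approximate bands we observed, not anything TikTok publishes, and the names `GATES`, `gates_passed`, and `is_viral_eligible` are made up for this example.

```python
from bisect import bisect_right

# Approximate view bands from our own observation; assumptions, not official numbers.
GATES = (2_000, 5_000, 10_000)


def gates_passed(views: int) -> int:
    """Return how many of the observed gates a video has cleared."""
    return bisect_right(GATES, views)


def is_viral_eligible(views: int) -> bool:
    """A video is past the audition stage once it clears the last gate."""
    return views >= GATES[-1]
```

By this reading, the 11,000-view video on our account had cleared all three gates, and the 100,000-view video was well into the performing stage.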

The night our first AI actor hit 100,000 views, the next best video on the account had 11,000. That gap — 100k to 11k — meant we’d cracked something. Not just a video that got lucky, but a formula that worked. When we did it again with a second actor, what could’ve been dismissed as a fluke turned into a pattern.

Both actors were AI-generated. Built from scratch. Neither one existed a few months earlier.

That pattern changed how Josh and I thought about marketing entirely. We’d been doing what every small team does: building great products and then staring at the distribution problem like it was somebody else’s job. We’d tried the influencer route. Scheduling was a nightmare, scripts needed constant coordination, and no two creators were consistent enough to feel like a brand. We didn’t need influencers. We needed influence we could control.

So we built an AI actor. Then we built the tool that makes them.

The Consistency Problem Nobody Else Had Solved

Here’s what the existing AI avatar tools got wrong: they couldn’t keep a face. You’d generate a character, make a great video, then generate the next one and she’d look like a different person. Every competitor we tested had the same problem. Your “consistent character” would drift across generations until followers noticed something was off.

This is the thing that kills AI-generated content before it starts. Social media runs on recognition. If your character looks slightly different every time, your audience’s trust erodes at the subconscious level, even if they can’t articulate why.

We solved it by building a reference system that locks a character’s appearance across generations. Generate once, extract what makes the character recognizable, then use that as a constraint for every future video. The character stays the character — across videos, across scenarios, across weeks of content. It sounds simple when I describe it, but getting there meant cycling through models that crashed, zoomed incorrectly, or produced compositions that looked like they were rendered by someone who’d never seen a human face.

The Model Graveyard

I won’t pretend we had a clean path to the right tech stack. We didn’t.

We cycled through more video generation models than I can count. Some were expensive and unstable, crashing under load with quality that wasn’t worth the cost. Others couldn’t frame a shot correctly or compose a usable image. Each failed model taught us what actually mattered (consistency, cost, speed) and what we could live without (perfect lighting, cinematic depth of field).

The breakthrough wasn’t any single model. It was building a pipeline that prioritized character consistency above everything else — so we could swap models underneath as better ones emerged without losing what made the actors recognizable. By the time we had the full pipeline dialed, we’d cut our per-video generation cost by about 80% compared to where we started. And the output was better.
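To make the "swap models underneath" idea concrete, here is a minimal sketch of that kind of pipeline. It is not Ovii’s actual code: `CharacterReference`, `VideoModel`, `ModelA`, `ModelB`, and `Pipeline` are hypothetical names, and the two stand-in models just return strings instead of rendering video.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class CharacterReference:
    """Hypothetical locked reference for one actor; frozen so it cannot drift."""
    actor_id: str
    reference_image: str  # path or key of the extracted reference image


class VideoModel(Protocol):
    """Any backend that renders a clip from a locked reference and a prompt."""

    def generate(self, reference: CharacterReference, prompt: str) -> str: ...


class ModelA:
    """Stand-in for one video model; returns a label instead of a real clip."""

    def generate(self, reference: CharacterReference, prompt: str) -> str:
        return f"model-a:{reference.actor_id}:{prompt}"


class ModelB:
    """Stand-in for a newer model that can replace ModelA."""

    def generate(self, reference: CharacterReference, prompt: str) -> str:
        return f"model-b:{reference.actor_id}:{prompt}"


class Pipeline:
    """Keeps the character reference constant while the model can change."""

    def __init__(self, model: VideoModel) -> None:
        self.model = model

    def swap_model(self, model: VideoModel) -> None:
        self.model = model

    def render(self, reference: CharacterReference, prompt: str) -> str:
        # The same reference is passed to whichever model is plugged in.
        return self.model.generate(reference, prompt)
```

The design choice this illustrates is that the actor’s identity lives in the reference, not in the model, so replacing the model does not touch what makes the actor recognizable.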

Treating AI Like a Film Set

The mental model that made Ovii, our actor-building tool, click was borrowed from film production, not from software.

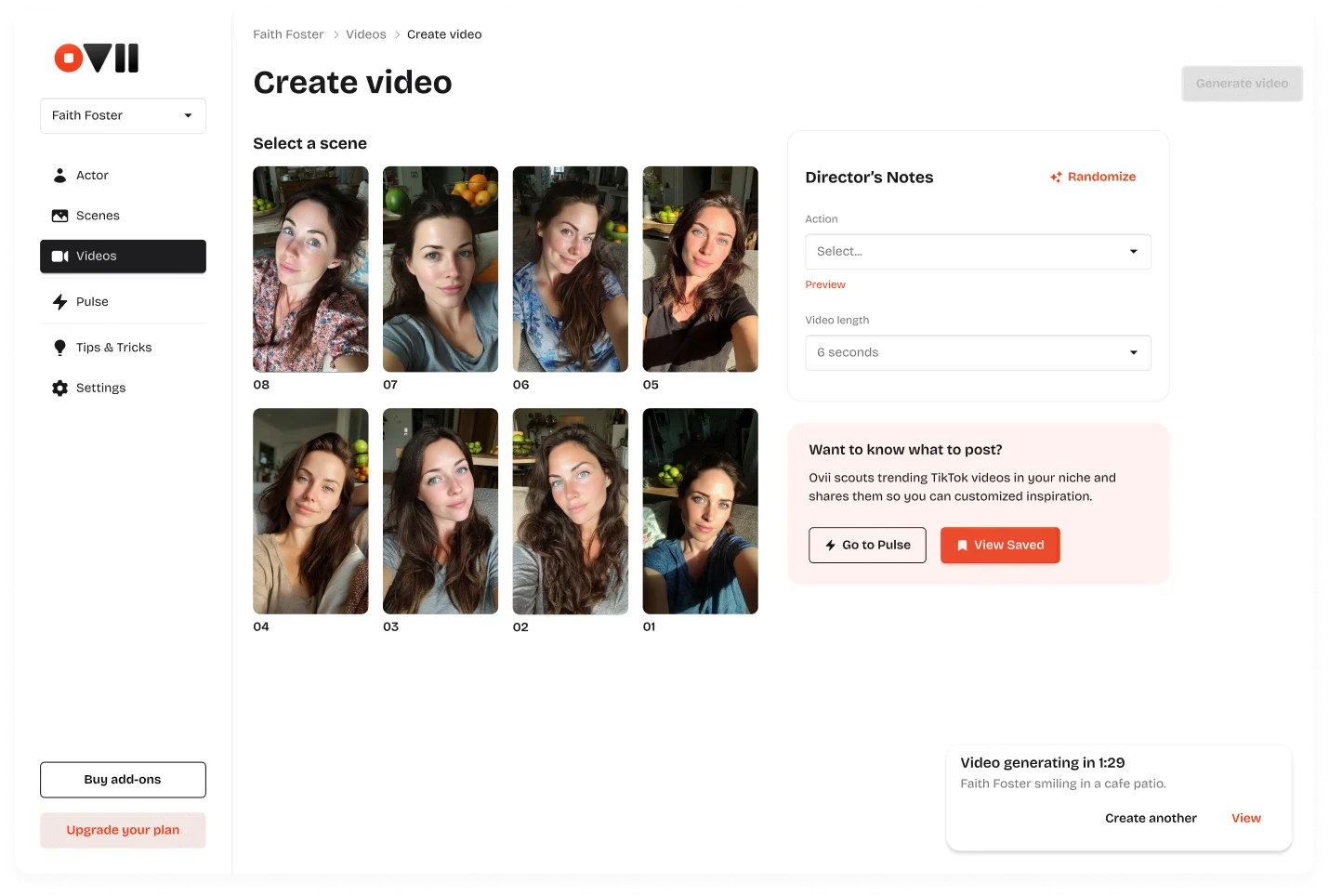

Creating an actor is casting. Creating a scene is shooting. Hitting record is the final step. That framing shaped every product decision — the dashboard is organized around individual actors (like an IMDb profile, not a generation queue), scenes are built with environment and pose in mind, and the workflow moves from character to context to content.

This sounds like a design philosophy, but it had real technical consequences. When we realized that avatars aren’t just characters — they’re first video frames — everything about pose-specific generation changed. A selfie-style video needs a selfie POV reference. A seated video needs a seated reference. You can’t use a standing character reference for a walking video and expect it to look right. So we built the system to generate the character in the appropriate pose based on the use case the creator selects. It eliminated the most common failure mode before the user ever hit generate.
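A minimal sketch of that selection step, assuming the use case maps one-to-one onto a pose. The `UseCase` values, the pose labels, and `build_reference_request` are illustrative names, not Ovii’s real API.

```python
from enum import Enum


class UseCase(Enum):
    """Use cases a creator might pick; an illustrative subset."""
    SELFIE = "selfie"
    SEATED = "seated"
    WALKING = "walking"


# Hypothetical mapping from use case to the pose of the first-frame reference.
POSE_FOR_USE_CASE = {
    UseCase.SELFIE: "selfie_pov",
    UseCase.SEATED: "seated",
    UseCase.WALKING: "walking",
}


def build_reference_request(actor_id: str, use_case: UseCase) -> dict[str, str]:
    """Pick the pose from the use case so the reference matches the video."""
    return {"actor_id": actor_id, "pose": POSE_FOR_USE_CASE[use_case]}
```

Because the pose is derived from the use case rather than chosen separately, a mismatched pairing such as a seated reference for a walking video cannot be requested in the first place.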

The First Real Users

The numbers from our own actors were the proof of concept. One actor hit a million views and eight thousand followers in thirty days. A second actor — built to promote a dating profile photo app called Fieldtrip — generated a single dating advice video about being open to new experiences and people that crossed 1.5 million views within two months. But proof of concept is one thing. Other people using your tool is another.

Our first paying customer was a founder building scheduling software for care agencies. We’d expected people to use Ovii for the obvious play — slick product promos with attractive AI faces. Instead, this person wanted to create an AI actor positioned as a care industry expert. Not a fake business owner, not a product shill — a character that could lead with value content and weave in product mentions naturally. It reframed how we thought about the tool entirely. Ovii wasn’t just for vanity UGC. It was for building a content strategy around a character that doesn’t need to be managed, scheduled, or paid residuals.

What Broke Along the Way

Laughing didn’t work. We’d built a set of pre-defined animations — smirking, looking around, glancing left and right — and “laughing” was supposed to be one of them. The output was consistently uncanny. We pulled it from the options entirely.

Walking animations clipped through furniture in indoor scenes. Kitchen environments rendered inconsistently across different actors. The actor-switching experience was sluggish because it triggered a full page refresh instead of a component-level update.

None of this was fatal, but all of it was the kind of thing that turns a promising tool into one that people try once and don’t come back to. We fixed each of these through user feedback — particularly from a power user who’d generated thirty videos and surfaced every edge case we hadn’t caught ourselves. That same testing process is how we landed on eight seconds as the optimal video length. Six seconds felt rushed — the motion didn’t have time to settle into something natural. Eight seconds gave the character enough room to move, shift expression, and feel like a person rather than an animation.

The Business That Emerged

We started with Ovii as an internal tool — something we built because we needed it for our own apps. It took four months to get from “we should make this a product” to a version that was professional and reliable enough for other builders to pay for. During those same four months, we shipped three other consumer iOS apps. That’s the reality of building as a two-person team — nothing gets your full attention, and everything ships anyway.

We landed on a tier system — Attract, Grow, Scale, and Enterprise — where each tier gives you a set number of actors, scenes, and videos. Simple enough for users to understand immediately, and structured so that the limits scale with how seriously you’re using the tool.

The validation that mattered most wasn’t our own results. It was the inbound. A skincare app with eight thousand ratings and a 4.9-star average reached out to us directly for UGC content. Enterprise marketing agencies started asking about avatar testing — using Ovii to prototype campaigns before committing budget to real creators. That’s the market signal that’s hard to manufacture: people finding you and telling you what they want to pay for.

Where Ovii Goes From Here

Voice integration is next: lip sync so the actors can actually speak, plus accent controls for global markets. Further upstream, we’re building out niche identification and competitor analysis so the tool can help you figure out what kind of actor to create, not just create one. Further downstream come music integration, text overlays, and trend-aware content suggestions.

But the thing I keep coming back to isn’t a feature. It’s the reaction from people who see the videos and don’t realize they’re AI. Not because we’re trying to deceive anyone — the accounts don’t pretend to be human. It’s that the quality has crossed the threshold where the content just works. People engage with it the way they engage with anything else in their feed. And for a two-person team trying to grow multiple apps without a marketing department, that’s the whole game.

Ovii is live. If you’re a small team trying to grow without a marketing department, this is how we do it.